Dream is a live theatre experience exploring remote audience interactivity. It came about as one of four Audience of the Future Demonstrator projects, supported by the UK government’s Industrial Strategy Challenge Fund, which is delivered by UK Research and Innovation.

It blends game engines with motion capture and live theatre, enabling an audience to interact with the virtual setting and narrative.

Inspired by Shakespeare’s A Midsummer Night’s Dream, this project began before the pandemic, and had to undergo major pivots for delivery.

“The complexity is how we bring this together in real time,” said Sarah Ellis, Director of Digital Development at the Royal Shakespeare Company.

“How do we redefine the rituals around theatre, not through a stage, but through a world that we can be a part of?” she said.

Audiences can log in via mobile, desktop or tablet.

The project was made in collaboration with Manchester International Festival (MIF), Marshmallow Laser Feast (MLF) and the Philharmonia Orchestra, among other partners (more info at end of post).

The Dream User Journey

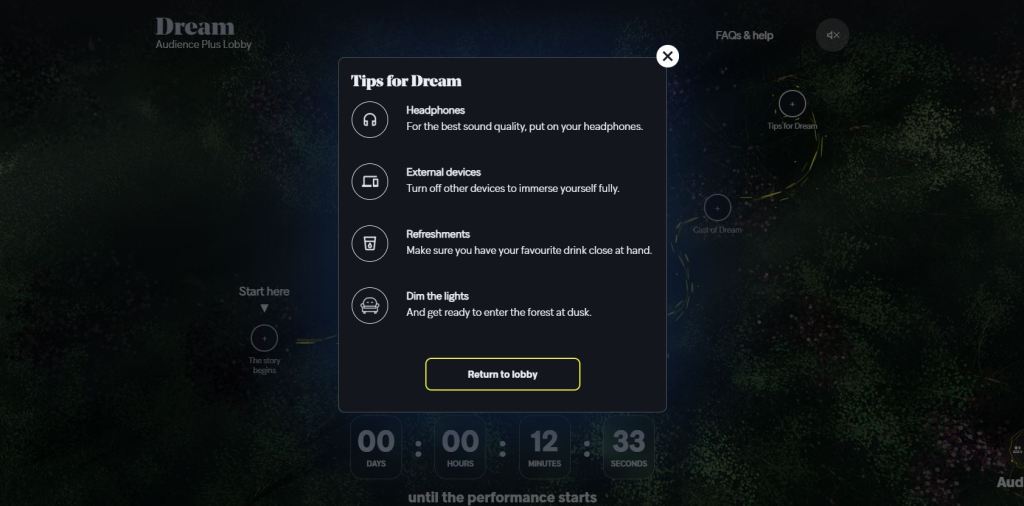

At home, I logged in to experience Dream. It brought me to a landing page, described as the Dream Audience Lobby. On this page, I could click on each of the circles to learn more about the experience, and how to interact.

In keeping with the typical theatre experience, the lobby suggested that I sit back and turn off my other devices for full immersion. A few of my friends were also logging in from their respective homes, though, so we set up a group call to talk throughout.

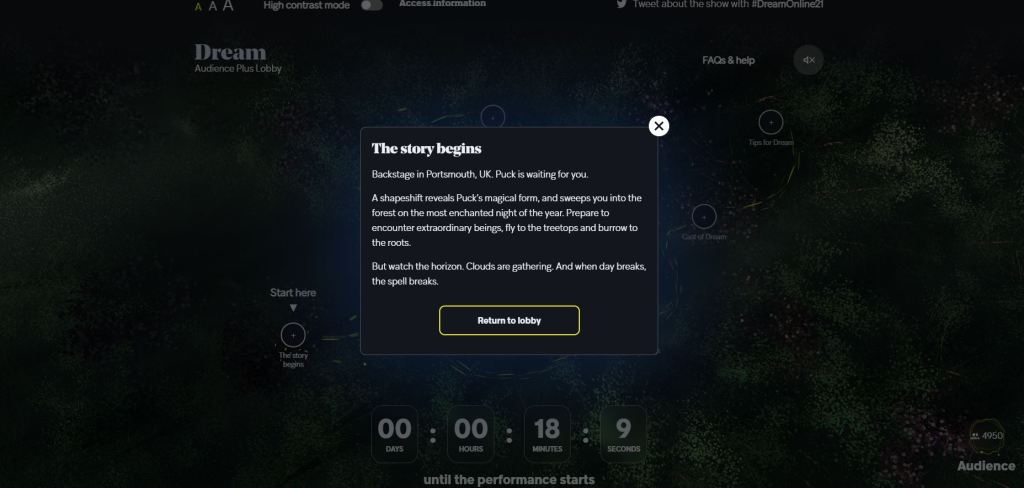

Progressing further into the pre-show lobby, the stage was set. The audience learned that the live performance was taking place in Portsmouth. Puck, the magical being in A Midsummer Night’s Dream, would take us on a journey through a virtual forest, where we’d have to keep an eye out for mysterious happenings.

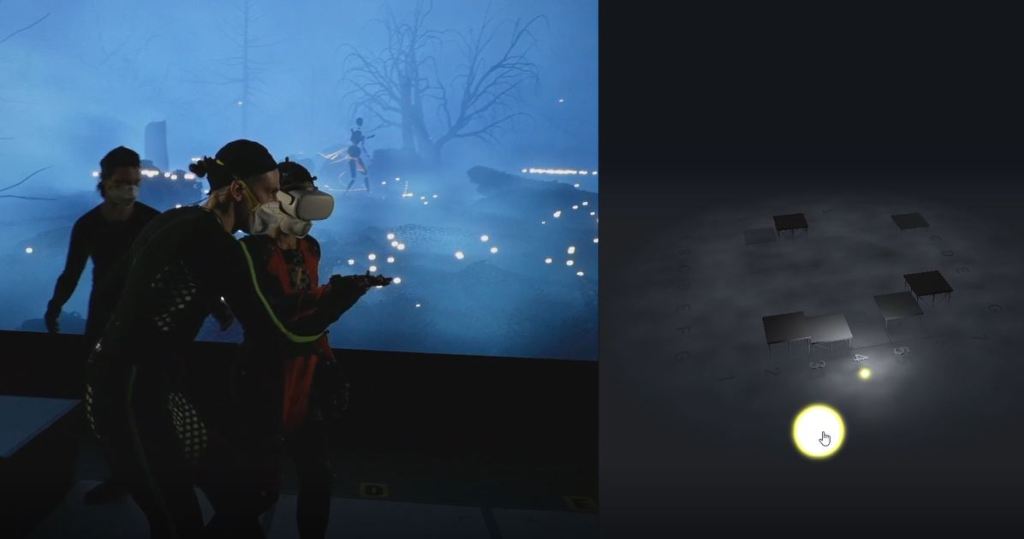

As I watched on my laptop, the camera followed the lead actress, EM Williams, live at the venue. She wore a full-body motion capture suit, so her movements were reflected in real time in the virtual setting. With a VR headset, she could see the virtual setting and react to the digital environment in real time.

As Dream began, the view shifted from the studio camera to a virtual camera in the game engine, showing the virtual world, where motion capture from the live actors animated the virtual characters.

Below is the fairy Puck, a pebble stack character, as imagined for this performance of Dream.

As Puck moved around, real-world blocks were placed in the live venue so that Puck could appear to climb up or down obstacles in the virtual forest. We followed Puck until it began to get dark and stormy, and the pebble fairy met another character, making for two real-time motion-captured actors (below).

Towards the end of the play, we came to the first of the two interactive elements. The camera switched to a live view of the venue.

“At Portsmouth, we have an LED wall that shows the fireflies to the actors so everyone is connected,” said Ellis.

Then my screen split in two. On the right was an interactive component that said “drag me”. I clicked on it and a light, my firefly, popped up on the right-hand side of the screen.

Having done some reading beforehand, I understood that the lights, or fireflies, now appearing on the backdrop were other audience members’ fireflies, but I was a bit confused as to how the interactivity worked. I dragged the button around a bit, but nothing I did seemed to have any effect. At the venue, the stage assistants guided Puck around.

Eventually the interactive component disappeared and the play resumed. We followed Puck on the virtual camera, through more of the forest, along a perilous journey with various forest spirits.

To wrap up, the play ended with the audience interacting again, this time planting seeds of light in the forest that made blossoms and trees appear everywhere.

This time, by chance, I released my click and my light catapulted forward on the right side of the screen. I assumed this meant that my light seed had been planted on the left-hand side of the screen too, and the interactive element disappeared.

The camera switched back to the studio, where the actors took their bows and the studio crew clapped. There was a lot of excitement in the studio, and an interview followed in which the actors talked about their experience and the making of Dream.

The performance lasted 25 minutes, and the entire experience with interviews and intro was 50 minutes.

The Pivot to Virtual Distribution

“With our pivot to online distribution, it’s brought up as many opportunities as it has challenges,” said Ellis.

“We’ve always assumed that the most important type of connection is the live experience. In the pandemic you’ve removed that aspect,” said Ellis.

“In some ways the audience has more agency than they ever have had, and not just through the stage, but through a virtual world they can be a part of,” she said.

“It will give you a unique opportunity to directly influence the live performance, from wherever you are in the world,” she said.

There were additional R&D elements in the project, such as testing commercial models for this type of experience at scale. Interactive tickets cost £10, and spectator-only tickets were free.

“Reaching mass audiences means testing willingness to pay and spectrums of payment and what that looks like,” said Ellis.

“We need to put work out there that’s risky and experimental,” she said, naming the biggest challenge as keeping the consortium together.

“You’ve got to give people space to work it out, creating the right conditions and culture for it.”

The performances took place from March 12 to 20. Dream also featured an interactive symphonic score, made possible with Gestrument technology, that responded to the actor’s movement during the play.

Research and data gathered will be shared with the public.

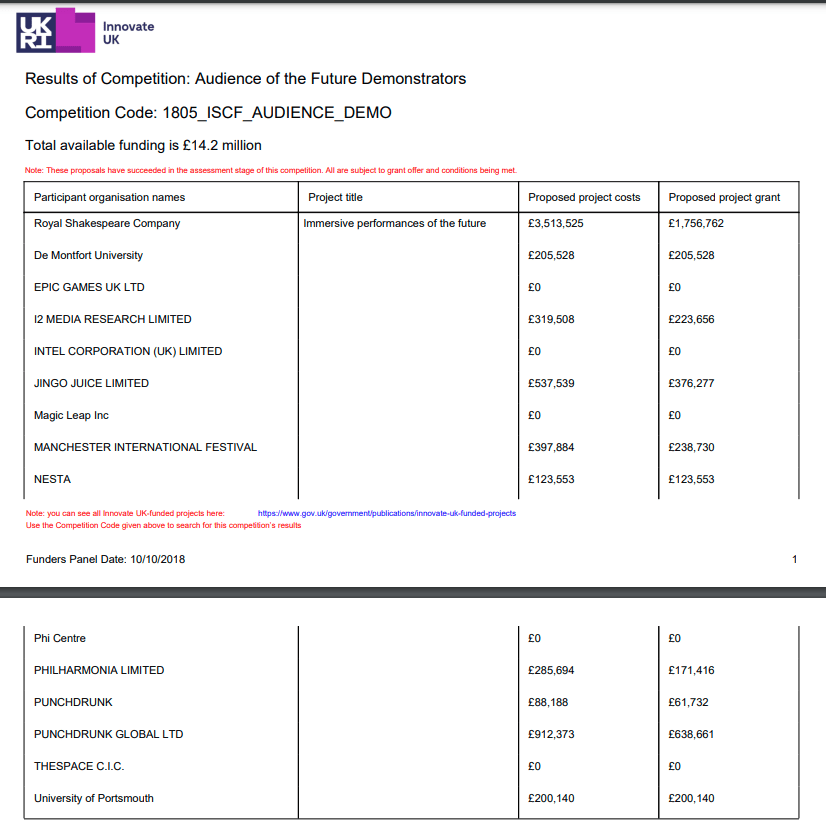

A general breakdown of costs and public funding for the project can be found below: