What do Deadpool 2, Blade Runner 2049, Far Cry 5, Justin Timberlake’s “Filthy” official music video, Fantastic Beasts and Where to Find Them, Quantum Break and FIFA all have in common? Facial motion capture.

Can you tell the difference between a human and a virtual human? Much like the challenge of distinguishing real images or recordings from deepfakes (so named for the deep learning used to create such antics), the line is blurring between human and animation, and between the real and the virtual.

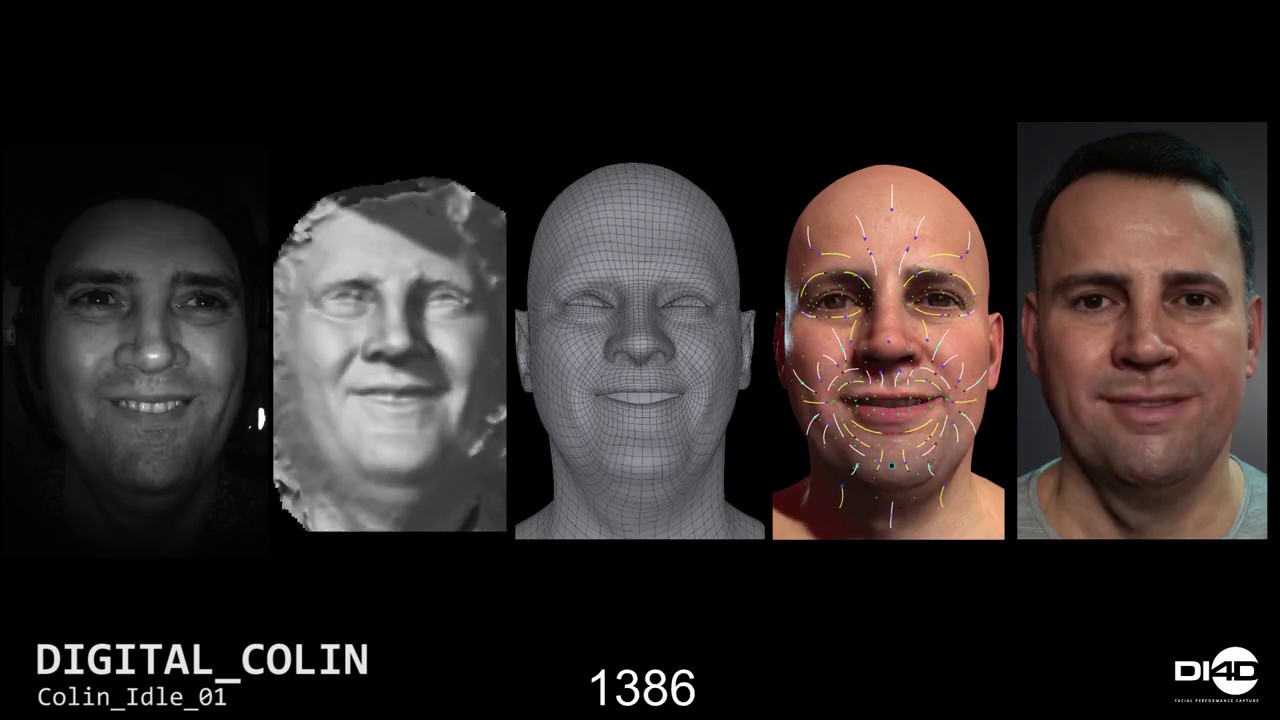

Dimensional Imaging, or DI4D, is a facial performance capture company that uses 4D capture with video cameras for facial animation. Essentially, this is a 3D scan of every frame of video, with a mesh technique used to manage the thousands of data points.

EA has used the DI4D system for years to model FIFA players, making the rounds of the football (soccer) clubs to capture the players’ faces. This was first done with static 3D scanning using studio cameras. Then 4D capture became available at video frame rates.

The minimum requirement for 3D data is a stereo pair of cameras that function as our eyes do, capturing two pictures from slightly different viewpoints for depth perception. The DI4D Pro system uses nine cameras: three stereo pairs (six cameras) for geometry, plus three color cameras to capture color and texture.
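To make the stereo idea concrete, here is a minimal sketch of how depth can be recovered from a single stereo pair. It assumes the textbook rectified pinhole-camera model, where depth equals focal length times baseline divided by disparity. This is not DI4D's actual pipeline, and the focal length, baseline, and disparity values are hypothetical numbers chosen only for illustration.

```python
def depth_from_disparity(focal_length_px: float,
                         baseline_m: float,
                         disparity_px: float) -> float:
    """Depth in metres of a point seen by a rectified stereo pair.

    Textbook pinhole model: depth = focal_length * baseline / disparity,
    where disparity is how far the point shifts between the two images.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical rig: 2000 px focal length, cameras 10 cm apart.
FOCAL_LENGTH_PX = 2000.0
BASELINE_M = 0.10

# A larger shift between the two views means the point is closer.
for disparity_px in (400.0, 410.0):
    depth_m = depth_from_disparity(FOCAL_LENGTH_PX, BASELINE_M, disparity_px)
    print(f"disparity {disparity_px:.0f} px -> depth {depth_m:.4f} m")
```

Under these assumed numbers, a shift of only a few pixels between the two views corresponds to about a centimetre of depth, which hints at why fine facial detail needs high-resolution cameras.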

In the video below, Colin Urquhart, CEO of Dimensional Imaging, demonstrates the Pro system. Using video and a mesh technique without any facial markers, the system brings an astounding amount of detail to the 3D model of Urquhart’s face. This is the video that caught my attention as I walked by one of their demos at a conference, demonstrating DI4D’s work with Unreal Engine:

“There’s only so many marker dots you can put on a person’s face and once you get above 100 it starts to get pretty impractical. You’d have to sit in makeup for hours getting these marker dots on and then they get rubbed off during performance. So we don’t require that,” said Urquhart.

With all the variation in skin movement and expression, techniques that rely on basic markers track only facial landmarks. What about blinking, the subtle movements of the skin that make up complex expressions, and even culture-specific variants of expression? These are just a few of the variables involved.

For example, it took a 7,400-vertex mesh to capture every nuance of Loren Peta’s facial performance in Blade Runner 2049, as she recreated the young Rachael of the original 1982 Blade Runner movie. The highly realistic facial animation was tracked without any makeup or markers.

After the data for Blade Runner 2049 was captured with DI4D’s system, visual effects supervisor John Nelson worked on Rachael’s two-minute appearance for a year. “It’s one thing to make a digital double look real, it’s another to make them perform and act,” said Nelson in an article by Entertainment, pointing to cheekbones, makeup, chin, eyes, and head tilting as essential to Rachael’s mannerisms.

“Once you’ve got the mesh, and we’ve got the selects, then each operator can currently do maybe 20 seconds of data per day,” said Urquhart. While the tracking process is highly sophisticated, it still involves manual clean-up. The area around the eyelid is especially tricky, as the top eyelid can easily appear to stick to the bottom lid.
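To put that rate in perspective, here is a back-of-the-envelope sketch based on Urquhart's figure of roughly 20 seconds of data per operator per day. It covers only the tracking step, not the rest of a visual effects pipeline, and it assumes the work splits evenly across operators. The function names and the team size are hypothetical.

```python
import math

# Urquhart's rough figure: seconds of tracked data per operator per day.
SECONDS_PER_OPERATOR_DAY = 20.0


def operator_days(shot_seconds: float,
                  seconds_per_day: float = SECONDS_PER_OPERATOR_DAY) -> float:
    """Total operator-days needed to track a shot of the given length."""
    return shot_seconds / seconds_per_day


def calendar_days(shot_seconds: float, operators: int) -> int:
    """Whole working days needed, assuming the shot divides evenly among operators."""
    return math.ceil(operator_days(shot_seconds) / operators)


two_minutes = 120.0  # length of a two-minute appearance, in seconds
print(operator_days(two_minutes))      # operator-days of tracking work
print(calendar_days(two_minutes, 3))   # working days for a hypothetical team of 3
```

At that rate, tracking alone for a two-minute appearance comes to about six operator-days, a small fraction of the overall effort such a shot can demand.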

It will not be long until simulations such as those in Blade Runner 2049 can be produced in far less than a year. Combining this kind of facial animation data collection with deep learning is a fine example of machine learning at work: the more information that is gathered, the faster the process becomes.

Gaming, and even VR gaming, is still easy to skip into and out of with a clear distinction between realities, but detailed facial capture such as this is an indicator that there will soon be deeper ties and psychological effects from the digital 2D and 3D worlds we explore.

“We’re now able to get super realistic high fidelity facial animation in an engine for virtual reality applications. I think that’s a really exciting feature with a potential to make VR more personal. You can have characters that you can actually emotionally engage with because you can read their emotional state,” said Urquhart.

With advanced techniques and the potential for facial motion capture software to evolve at unprecedented rates, the evolution of digital humans and virtual characters isn’t due to be trapped in an ice age of the uncanny valley for long. Just one blink and the way we see digital experiences and virtual beings will change our interpretation of just how real the virtual can be.

By Anne McKinnon